Create Multilingual Avatar Videos in 1 Min with 'Enhancor' AI Lipsync

Title

Become a Global YouTuber from Your Room! Create Multilingual Avatar Videos in 1 Min with 'Enhancor' AI Lipsync 👄🌍

Introduction

Hello, creators! Welcome to a2set's AI Tutorial.

While running your YouTube, TikTok, or Reels channels, have you ever thought, "I'm not comfortable showing my real face; I wish a beautiful AI avatar could speak naturally in front of the camera instead," or "I want to make a Short where my original character speaks English as fluently as a native speaker"?

In the past, this required immense costs and technical skills, such as 3D character rigging, motion capture equipment, and manual lip-syncing. However, with the powerful 'Lipsyncing' feature of 'Enhancor', the AI platform that surprised us with cinematic commercial (CF) generation in our last tutorial, all of this can be done in just 1 minute.

The latest version we're covering today does more than make the lips move. It breathes life into a single still photo, generating a video in which the character stands against a beautiful background, looks naturally at the camera, and speaks with closely matched lip-sync. Let's dive right in!

🚨 [Mandatory Guide: Lipsync Quality & Pricing Plan Check] Lipsync technology, which analyzes lip movements frame-by-frame and dynamically generates high-definition video, requires highly advanced AI computation. To achieve perfect high-resolution processing and commercial use without watermarks, we strongly recommend subscribing to the 'Creator' plan ($45/mo).

Step 1: Project Prep - Prepare the Face and Voice

Before diving into lipsyncing, you only need two ingredients:

Face Source (Image): An original character photo whose lip movements you want to change. You can generate this photo directly in Enhancor, or it can be a photo created on any other platform like Midjourney or Stable Diffusion! Please prepare a clear photo showing a frontal or semi-profile view.

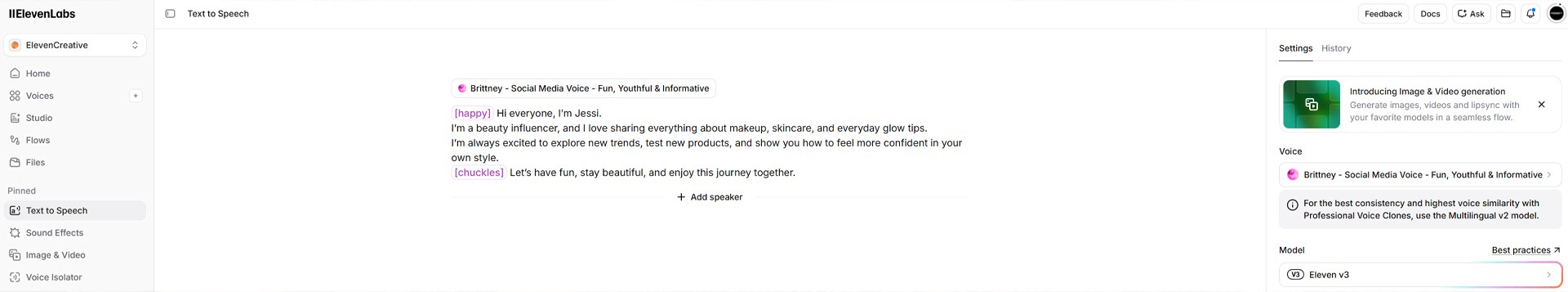

Voice Source (Audio): The new voice file (.mp3, .wav) you want the character to speak with. You can record your own voice, or use a high-quality English or multilingual audio file generated by an AI voice tool such as ElevenLabs.
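Before uploading, it can help to confirm that both ingredients exist and are in the expected formats. The snippet below is a minimal sketch of such a local pre-flight check, not part of Enhancor itself. The function names `check_sources` and `wav_duration_seconds` are hypothetical, and the list of image extensions is an assumption; only the .mp3 and .wav audio formats come from this tutorial.

```python
import wave
from pathlib import Path

# The audio formats are the ones named in this tutorial.
AUDIO_EXTS = {".mp3", ".wav"}
# Assumption: common image formats; not a documented Enhancor limit.
IMAGE_EXTS = {".png", ".jpg", ".jpeg", ".webp"}


def check_sources(image_path: str, audio_path: str) -> list[str]:
    """Return a list of readable problems; an empty list means all good."""
    problems = []
    image, audio = Path(image_path), Path(audio_path)
    if not image.is_file():
        problems.append(f"image not found: {image}")
    elif image.suffix.lower() not in IMAGE_EXTS:
        problems.append(f"unexpected image type: {image.suffix}")
    if not audio.is_file():
        problems.append(f"audio not found: {audio}")
    elif audio.suffix.lower() not in AUDIO_EXTS:
        problems.append(f"unexpected audio type: {audio.suffix}")
    return problems


def wav_duration_seconds(audio_path: str) -> float:
    """Length of a WAV file in seconds (standard library only, no MP3)."""
    with wave.open(audio_path, "rb") as wav:
        return wav.getnframes() / wav.getframerate()
```

Calling `check_sources("face.png", "voice.wav")` returns an empty list when both files are present and correctly named; otherwise each entry describes one thing to fix before you open the Lipsyncing tab.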

Step 2: Access the Lipsyncing Tab & Set Up Image/Prompt 🎙️

Once your ingredients are ready, head over to the Enhancor platform to start directing. You can completely forget about complex video editing tools.

Go to Enhancor (enhancor.com) and enter the [Video Generator] (or Apps) from the top menu.

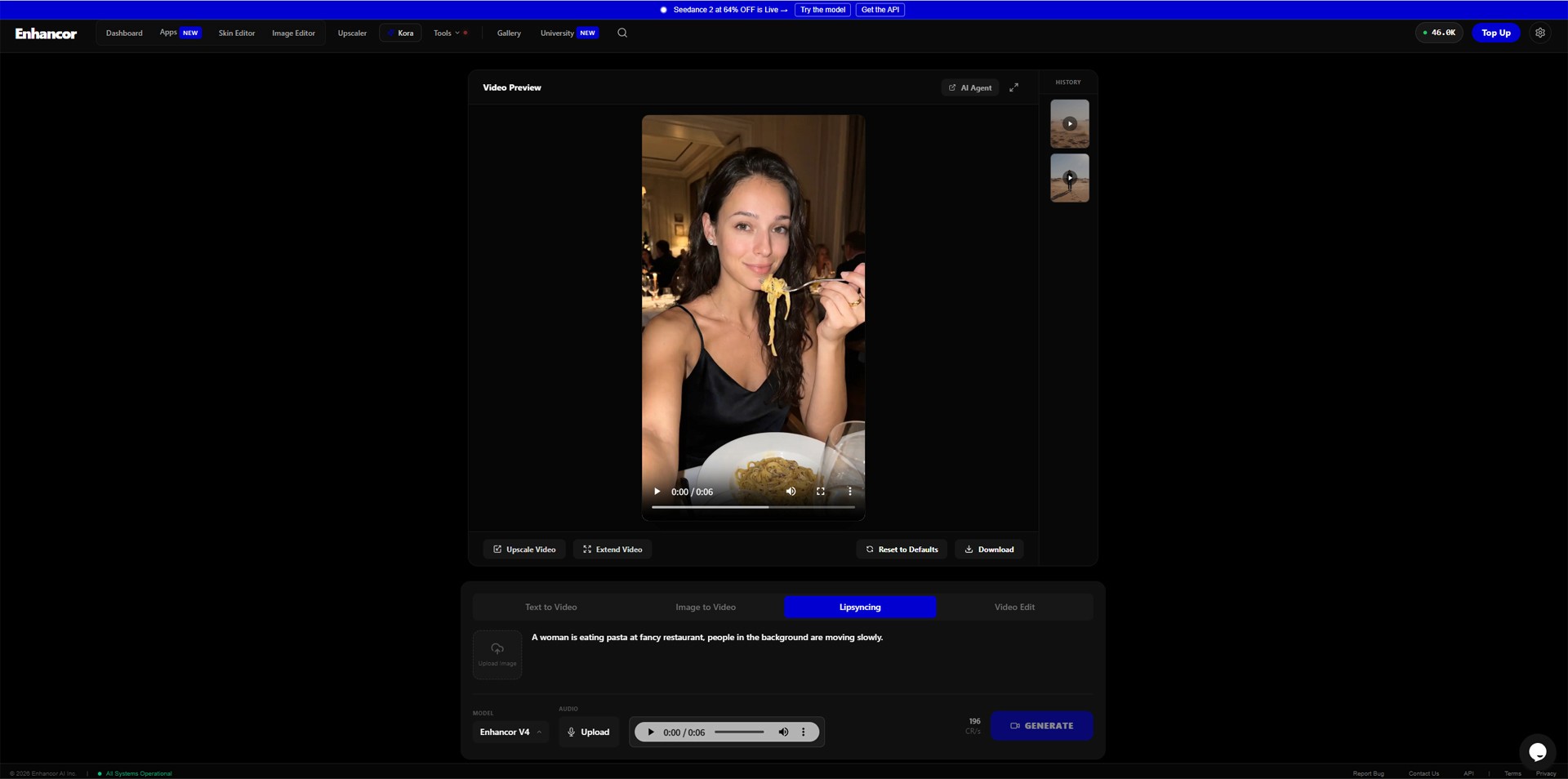

Among the various tabs at the bottom of the screen (Text to Video, Image to Video, etc.), click the

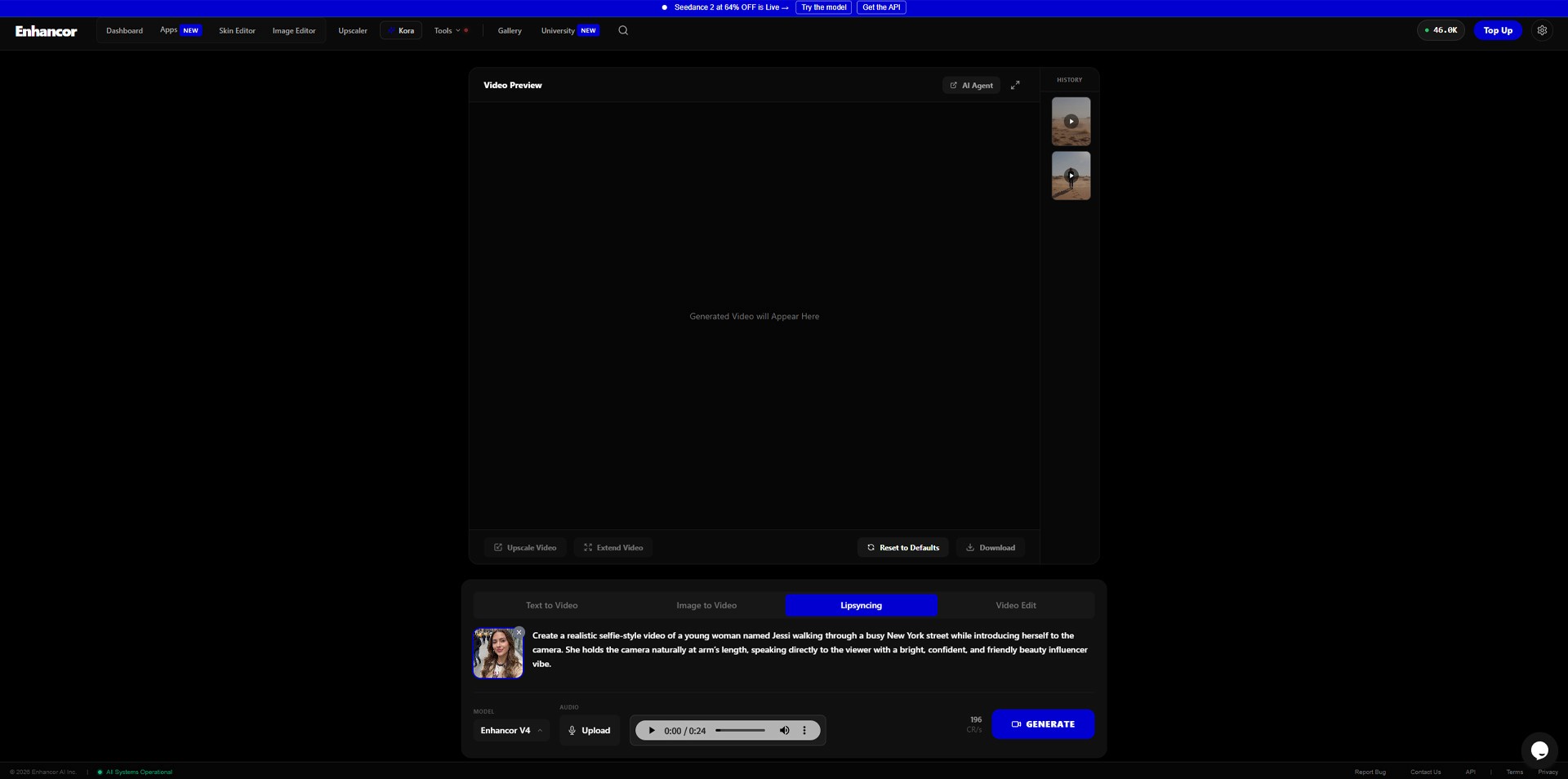

Lipsyncingtab.Click the Image Upload Icon located to the left of the prompt input box, and upload the 'Face Source Photo' you prepared in Step 1.

Now, use the prompt box to describe in what situation and how this still photo should speak and move. Copy and paste the practical prompt below exactly as it is.

[Practical Lipsync Directing Prompt (For Copy & Paste)]

💡 a2set Pro Tip: For lipsync videos, the AI tracks facial landmarks much better when the character is in a 'standing position looking at the camera with natural expressions', as described in the prompt above, than in dynamic scenes where the character walks or runs around. This gives you results where the lip movements and facial details come to life with striking accuracy!

Step 3: Select Model, Upload Audio & Generate ⚙️

Once you've set the mood of the video, it's finally time to overlay the voice and select the AI model version.

MODEL Setup: Click the MODEL dropdown below the prompt box and select [Enhancor V4], which integrates the latest lipsync technology.

AUDIO Upload: Click the AUDIO [Upload] button (or microphone icon) right next to the model setup to upload the voice file (.mp3, .wav) you prepared in Step 1. An audio playback bar will appear once the upload is complete.

After confirming all settings are correct, click the blue [GENERATE] button at the bottom right!

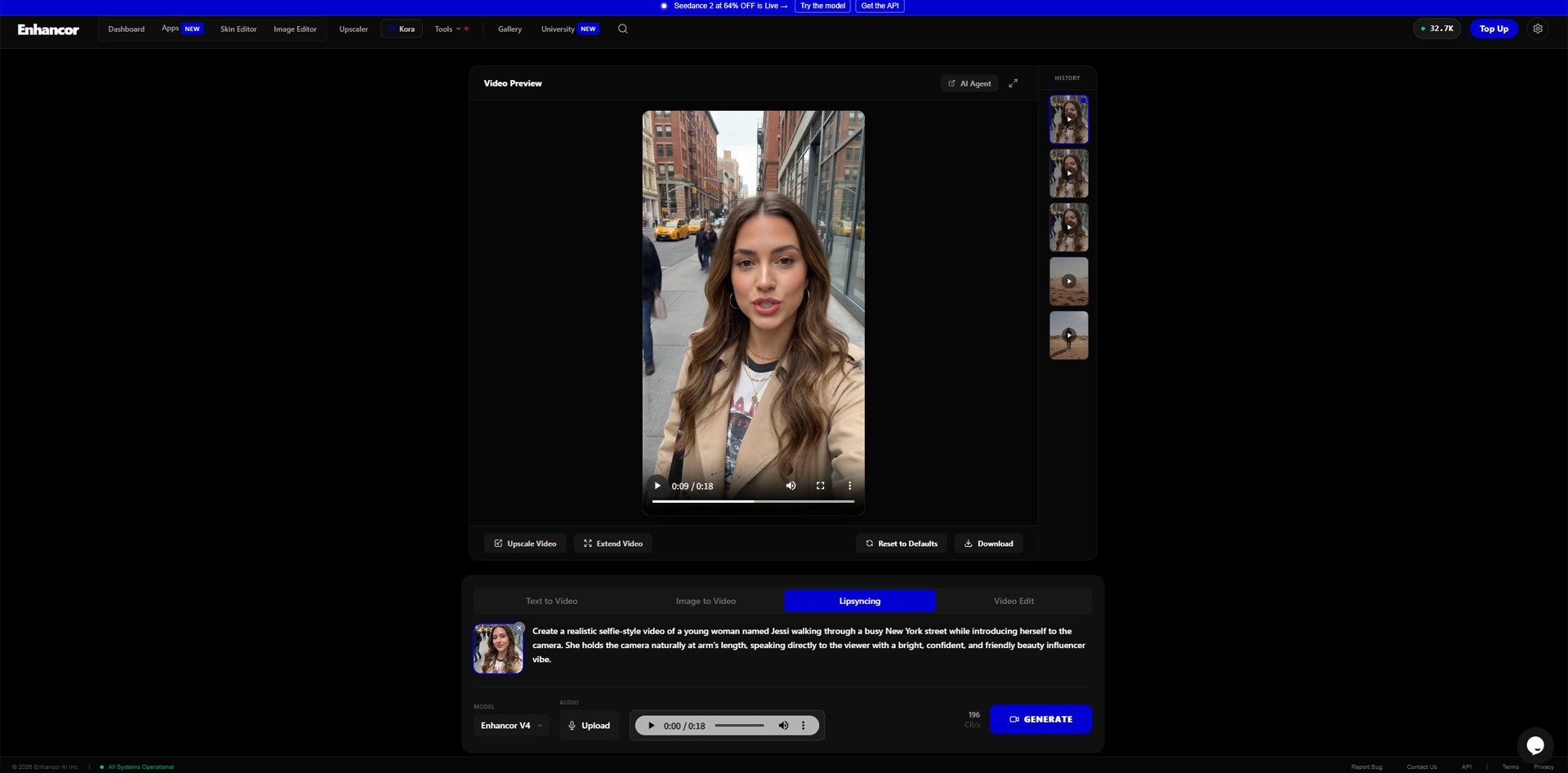

Now, based on your uploaded still image, the AI will generate a sensational vlog-style video of the character standing naturally against a New York backdrop. Simultaneously, it will analyze the waveform and pronunciation of your uploaded audio to redraw the lip muscle movements frame-by-frame.

Step 4: Check the Magical Results & Go Global! 🚀

After a short while, play (▶️) the fully rendered video in the [Video Preview] screen at the top.

The results will be shocking. The still AI image comes to life in a stable selfie angle, blinking and subtly nodding, reborn as a native influencer pronouncing English perfectly in sync with the uploaded audio.

It doesn't just flap its lips; the details of the lower lip biting during tricky English pronunciations like 'F' or 'Th', and the subtle movements of the jawline matching the breath, are perfectly synchronized.

Click the [Download] button at the bottom right to save this incredible video to your PC!

Conclusion

'Visual Dubbing' technology, which used to cost hundreds of thousands of dollars to produce for famous Hollywood movies or Netflix original series, has now landed right on the desks of individual creators via Enhancor AI.

Now, you can create content targeting viewers worldwide without language barriers or filming constraints. Just take an attractive avatar photo generated by Midjourney in your room, drop it into Enhancor along with AI-translated Spanish, Japanese, or Arabic audio files, and hit generate. In an instant, a 'Global Virtual YouTuber fluent in 5 languages' is born.
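If you plan to produce several language versions of the same avatar, a small checklist of image and audio pairs keeps the uploads organized. The sketch below only builds that list locally; it does not call Enhancor. The function name `plan_jobs` and the file naming convention (`<name>_<language code>.mp3` or `.wav`, for example `intro_es.mp3`) are hypothetical choices made for illustration.

```python
from pathlib import Path


def plan_jobs(avatar: str, audio_dir: str) -> list[dict]:
    """Pair one avatar image with every .mp3/.wav file in audio_dir."""
    jobs = []
    for audio in sorted(Path(audio_dir).iterdir()):
        if audio.suffix.lower() not in {".mp3", ".wav"}:
            continue  # skip scripts, notes, and other non-audio files
        # Assumed convention: the text after the last "_" is the language code.
        language = audio.stem.rsplit("_", 1)[-1]
        jobs.append({"image": avatar, "audio": audio.name, "language": language})
    return jobs
```

Each entry in the returned list corresponds to one pass through Steps 2 to 4: upload the same image, attach that entry's audio file, and generate.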

AI is no longer just a fascinating toy; it is the most powerful weapon to expand your content business globally. Pull out a photo from your album right now and start your global lipsync test!

We will return in the next a2set tutorial with more innovative AI workflows that will save you time and money.