Google DeepMind's Shocking Reveal of Project Genie

Title

A Single Sketch Becomes a 'Playable Game'… Google DeepMind's Shocking Reveal of 'Project Genie' 🎮🚀

Introduction

Hello, this is a2set AI NEWS.

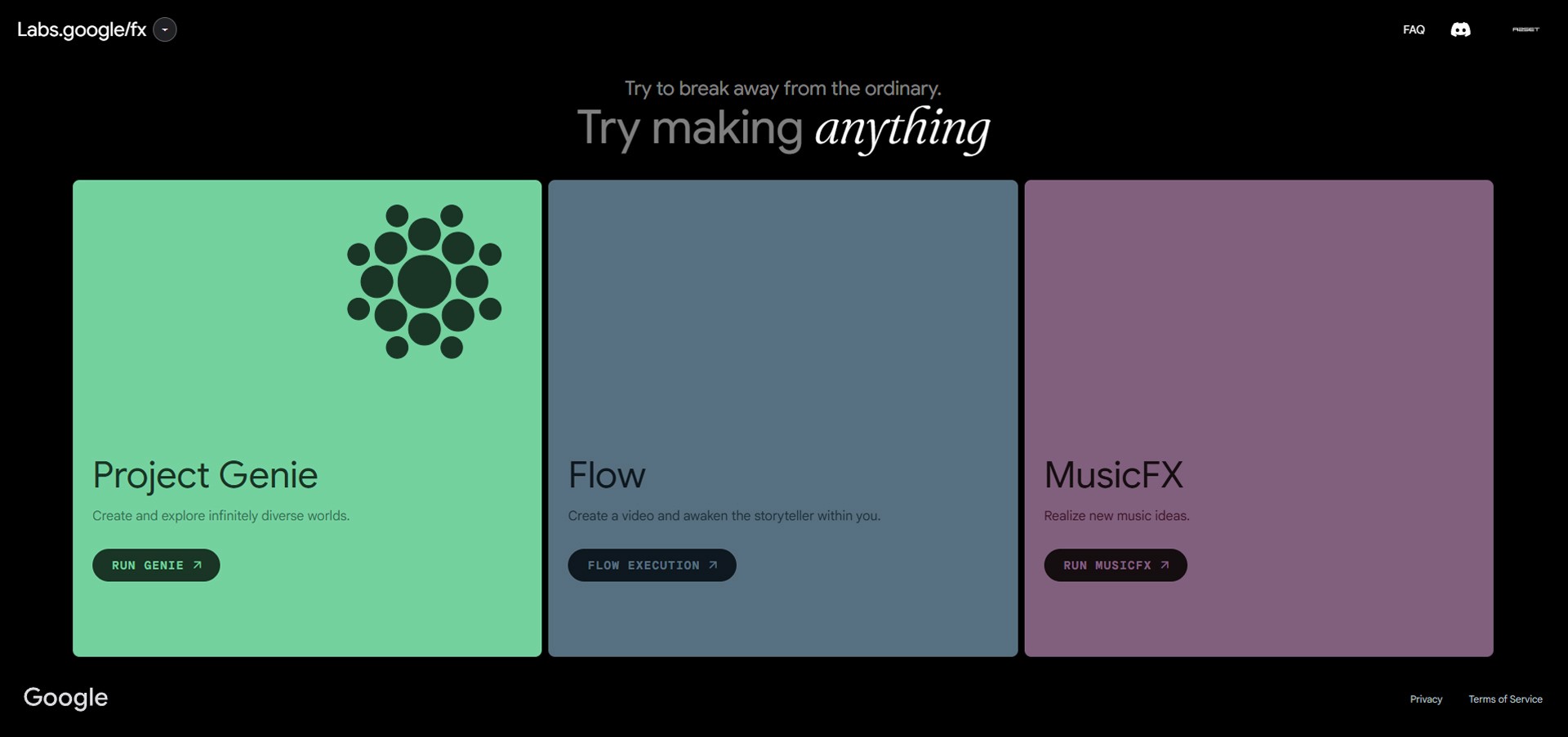

Generative AI has finally reached the realm of 'interaction'. Google DeepMind has officially unveiled 'Project Genie', a foundation world model that generates playable 2D game worlds on the fly from a single text or image input.

While earlier AI systems generated text, images, or videos that could only be played back, Project Genie is sending shockwaves through the global IT and gaming industries by rendering, in real time, an 'environment that users can control and intervene in with a keyboard'.

The a2set newsroom conducted an in-depth analysis of Project Genie's core technologies and of its potential impact, which might even bring the era of coding to an end.

🌟 Core Analysis: What Makes Project Genie Revolutionary?

Drawing on Google DeepMind's published technical whitepaper and the Google Labs test environment, we identified the three core innovations the industry is focusing on:

1. Zero Coding, 'Image-to-Game'

You don't need to write a single line of Python or C#, nor do you need any knowledge of game engines like Unity or Unreal. Simply upload a wonky pen sketch drawn on a paper napkin, a landscape image generated by Midjourney, or a real-world photograph, and the AI recognizes it and instantly converts it into a 2D side-scrolling platformer map.

2. The World's First 'Interactive Video' Model

The most shocking part is that Genie does not compute motion in a mathematical coordinate system the way a traditional game engine does. Genie is, in fact, a 'video generation model'. The moment a user presses a directional key (left, right, jump) on the keyboard, the AI imagines the 'next frame (scene)' that would follow from that action and renders the result as a continuous video in real time. You are not just playing a game; you are watching a video being generated in real time in response to your controls.
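The frame-by-frame loop described above can be pictured with a toy sketch. This is not Genie's architecture: `predict_next_frame` is a hypothetical, hand-written stand-in for the learned model, and the tiny character-grid "frames" are an assumption made purely for illustration. The point it shows is that the only state carried forward is the frames themselves, and each keypress conditions the next one.

```python
from typing import List, Tuple

# A tiny "image": rows of characters. '@' is the player, '#' is ground.
Frame = Tuple[Tuple[str, ...], ...]

WIDTH, HEIGHT = 8, 4
ACTIONS = ("left", "right", "jump", "noop")


def make_frame(x: int, y: int) -> Frame:
    """Render a frame with the player at column x, y rows above the ground."""
    rows = []
    for r in range(HEIGHT):
        if r == HEIGHT - 1:
            rows.append(tuple("#" * WIDTH))  # bottom row is solid ground
        else:
            rows.append(tuple(
                "@" if (c == x and r == HEIGHT - 2 - y) else "."
                for c in range(WIDTH)
            ))
    return tuple(rows)


def find_player(frame: Frame) -> Tuple[int, int]:
    """Recover (x, y) from the pixels alone; no separate game state is kept."""
    for r, row in enumerate(frame):
        for c, ch in enumerate(row):
            if ch == "@":
                return c, HEIGHT - 2 - r
    raise ValueError("no player found in frame")


def predict_next_frame(history: List[Frame], action: str) -> Frame:
    """Hypothetical stand-in for a learned model: (past frames, action) -> next frame."""
    x, y = find_player(history[-1])
    if action == "left":
        x = max(0, x - 1)
    elif action == "right":
        x = min(WIDTH - 1, x + 1)
    if action == "jump" and y == 0:
        y = 2          # leave the ground
    elif y > 0:
        y -= 1         # fall one row per frame while airborne
    return make_frame(x, y)


def rollout(first_frame: Frame, actions: List[str]) -> List[Frame]:
    """Autoregressive loop: every generated frame is fed back in as context."""
    frames = [first_frame]
    for action in actions:
        frames.append(predict_next_frame(frames, action))
    return frames


if __name__ == "__main__":
    for frame in rollout(make_frame(1, 0), ["right", "jump", "right", "noop"]):
        print("\n".join("".join(row) for row in frame), end="\n\n")
```

In a real world model the hand-written rules inside `predict_next_frame` would be replaced by a neural network's prediction; the surrounding loop is the part that corresponds to "watching a video being generated in response to your controls".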

3. Mastering Physics via Unsupervised Learning

When training Genie, Google DeepMind provided absolutely no action labels (e.g., "this is a jump," "this is the ground") or code. They simply had it watch 200,000 hours of 2D gameplay videos found on the internet. As a result, Genie reached a level where it could infer physical regularities on its own, such as "a character in mid-air is pulled down by gravity along a parabola" and "pixels of this color are solid ground that can be stood on."
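One way to picture learning without action labels is the toy analogy below. It is only a sketch under strong assumptions: the "video" is reduced to a list of player positions, and `infer_latent_actions` is a hypothetical helper that assigns an integer code to each distinct frame-to-frame change. A real model would learn such codes from raw pixels with a neural network; the sketch shows only the idea that recurring transitions can be grouped into action-like categories that nobody named in advance.

```python
from typing import Dict, List, Tuple

Position = Tuple[int, int]   # (x, y) of the player in one frame
Delta = Tuple[int, int]      # change in position between two frames


def infer_latent_actions(track: List[Position]) -> Tuple[List[int], Dict[Delta, int]]:
    """Assign an unnamed integer code to each distinct frame-to-frame change.

    No labels such as "jump" or "left" are given; codes are created in order
    of first appearance, so identical transitions share the same code.
    """
    codebook: Dict[Delta, int] = {}
    codes: List[int] = []
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        delta = (x1 - x0, y1 - y0)
        if delta not in codebook:
            codebook[delta] = len(codebook)  # new, unnamed action category
        codes.append(codebook[delta])
    return codes, codebook


if __name__ == "__main__":
    # Positions observed in an unlabeled clip (hypothetical data).
    observed = [(0, 0), (1, 0), (2, 0), (2, 2), (2, 1), (1, 1)]
    codes, codebook = infer_latent_actions(observed)
    print(codes)
    print(codebook)
```

A human can later look at the codebook and notice that one code always moves the character right and another always lifts it off the ground, which is how unnamed categories can end up being mapped to keyboard keys.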

💡 Technological Limitations and Current Test Status

Of course, the currently revealed Project Genie is still an early-stage research model (11B parameters).

Currently, it operates slowly at about 1 frame per second (1 fps) and is limited to short sequences of around 16 frames. Therefore, it is unreasonable to compare it to commercial games in terms of image quality and frame rate. Furthermore, testing is only permitted in limited environments such as the US region via Google Labs, restricting access for global creators. (In some regions, tests are being conducted using workarounds like VPNs.)

However, just as when the early model of Sora was revealed, this release carries immense significance because it serves as a successful proof of concept.

🎯 AI NEWS Insight: From 'Content Consumption' to 'World Creation'

The emergence of Project Genie suggests that the paradigm of AI has entered a completely new phase.

Instead of merely 'consuming' text or video, anyone can now 'create' their own interactive metaverse space or game world from a single concept sketch. If this model is optimized to support 3D environments and high frame rates, the solo developer and indie game markets will face an unprecedented upheaval.

'An era where everything you imagine instantly becomes a reality you can interact with.' a2set AI NEWS will continue to swiftly track and report on the future of the new metaverse that Project Genie will open up.