The Completion of Emotionally Intelligent Conversational Infrastructure, ElevenLabs & ElevenAgents

Title

Beyond Simple Text Reading to 'Emotional Performance' and Autonomous Action: ElevenLabs' Innovation

Introduction

Past text-to-speech (TTS) technology was limited to unnatural intonation and mechanical, robotic voices. By 2026, ElevenLabs has grown into a major 'audio layer' of the internet, recreating subtle human emotions, breathing rhythm, and situational nuance with striking fidelity. ElevenLabs has shed its label as a mere voice-synthesis startup and is aggressively moving into the enterprise customer-service and productivity market with 'ElevenAgents', an emotionally intelligent conversational AI platform that reads images and documents, converses in real time, and carries out real-world tasks. Its maturity is underscored by Deutsche Telekom, a global telecom giant, which adopted the technology as a real-time call assistant at MWC 2026, the world's largest mobile exhibition.

Error Reduction Rate by Text Conversion Category (Eleven v3 model)

| Category | Error Reduction vs. Previous Models (%) |
|---|---|
| ISBN Numbers | 100 |
| Chemical Formulas | 99 |
| Phone Numbers | 99 |
| URLs & Emails | 91 |
| License Plates | 91 |
| Mathematical Expressions | 71 |

Compared with previous model versions, Eleven v3 has nearly eliminated errors when pronouncing symbols and numbers that require contextual interpretation, such as chemical formulas, phone numbers, and ISBNs. Mathematical expressions remain the hardest category, with a 71% reduction.
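The percentages above are relative reductions. The source does not state how they were computed, so the following assumes the standard definition, where $e_{\text{old}}$ and $e_{\text{new}}$ are the per-category error rates of the earlier model and of Eleven v3. The 2% starting error rate in the second line is a hypothetical value chosen only to show the arithmetic.

```latex
\begin{aligned}
r &= \frac{e_{\text{old}} - e_{\text{new}}}{e_{\text{old}}}
  \quad\Longrightarrow\quad e_{\text{new}} = (1 - r)\,e_{\text{old}} \\
\text{URLs (hypothetical } e_{\text{old}} = 0.02\text{): } e_{\text{new}} &= (1 - 0.91) \times 0.02 = 0.0018 = 0.18\%
\end{aligned}
```

Under this reading, the 100% figure for ISBNs would mean that no errors were observed in that benchmark category, not that errors are impossible.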

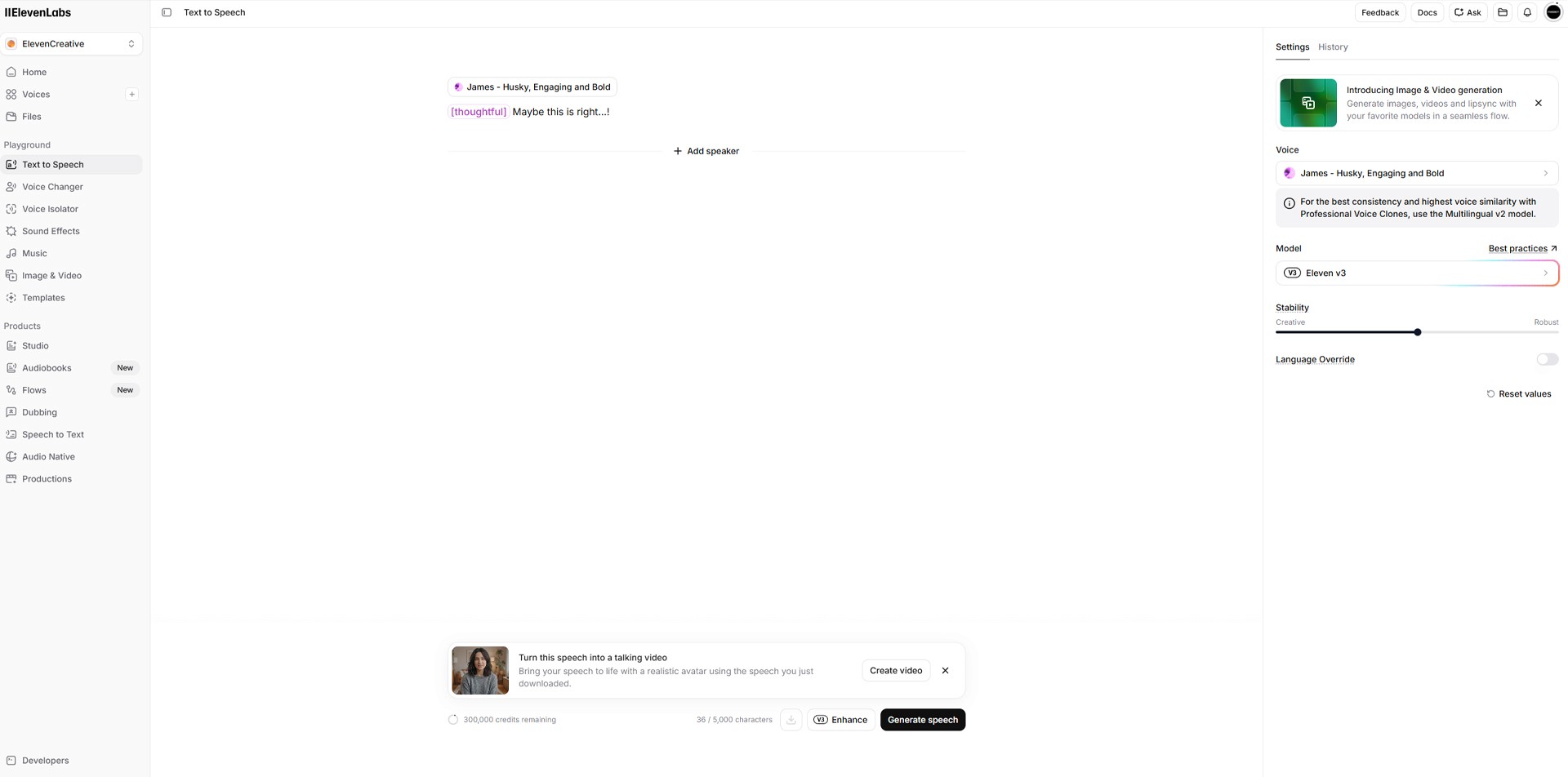

Data source: ElevenLabs

The most significant feature of 'Eleven v3', ElevenLabs' latest flagship model, officially released in February 2026, is its 'text-to-performance' capability, which goes far beyond reading words aloud. It speaks more than 70 languages at a native-speaker level and substantially reduces the errors that were chronic in previous-generation models. According to ElevenLabs' internal benchmarks, the error rate dropped by an average of 68% on text whose correct reading depends on context, such as country-specific phone-number formats and complex mathematical and chemical formulas. An even more notable feature is support for 'Audio Tags' inside text prompts: bracketed commands such as [whispers], [sighs], and [shouts]. These let audiobook producers and podcasters direct the AI's emotional tone and breathing at precise points in the script, much as a film director directs an actor.
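To make the audio-tag idea concrete, here is a minimal sketch that checks a script's bracketed tags and builds (but does not send) a TTS HTTP request using only the Python standard library. It assumes the REST endpoint `https://api.elevenlabs.io/v1/text-to-speech/{voice_id}`, the `xi-api-key` header, and the model ID `eleven_v3`; verify all three against the current ElevenLabs API reference. The `KNOWN_TAGS` whitelist is a hypothetical illustration built from the three tags named above; the real model accepts a broader set.

```python
import json
import re
import urllib.request

# Assumed endpoint and model ID; check the current API reference before use.
API_BASE = "https://api.elevenlabs.io/v1/text-to-speech"
DEFAULT_MODEL = "eleven_v3"

# Hypothetical whitelist: only the tags mentioned in the article.
KNOWN_TAGS = {"whispers", "sighs", "shouts"}
TAG_PATTERN = re.compile(r"\[([a-z ]+)\]")


def find_tags(script: str) -> list[str]:
    """Return the audio tags in the order they appear in the script."""
    return TAG_PATTERN.findall(script)


def build_tts_request(voice_id: str, script: str, api_key: str,
                      model_id: str = DEFAULT_MODEL) -> urllib.request.Request:
    """Validate the tags, then build a POST request; nothing is sent here."""
    unknown = [tag for tag in find_tags(script) if tag not in KNOWN_TAGS]
    if unknown:
        raise ValueError(f"unrecognized audio tags: {unknown}")
    # Tags stay inline in the text; the model interprets them as performance cues.
    body = json.dumps({"text": script, "model_id": model_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/{voice_id}",
        data=body,
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_tts_request(
        voice_id="YOUR_VOICE_ID",  # placeholder
        script="[whispers] Don't tell anyone. [sighs] It has been a long day.",
        api_key="YOUR_API_KEY",    # placeholder
    )
    print(req.get_method(), req.full_url)
    # urllib.request.urlopen(req) would send it; not executed in this sketch.
```

Keeping the tags inline in the `text` field, rather than in a separate parameter, mirrors how the article describes them: as commands embedded in the prompt itself.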

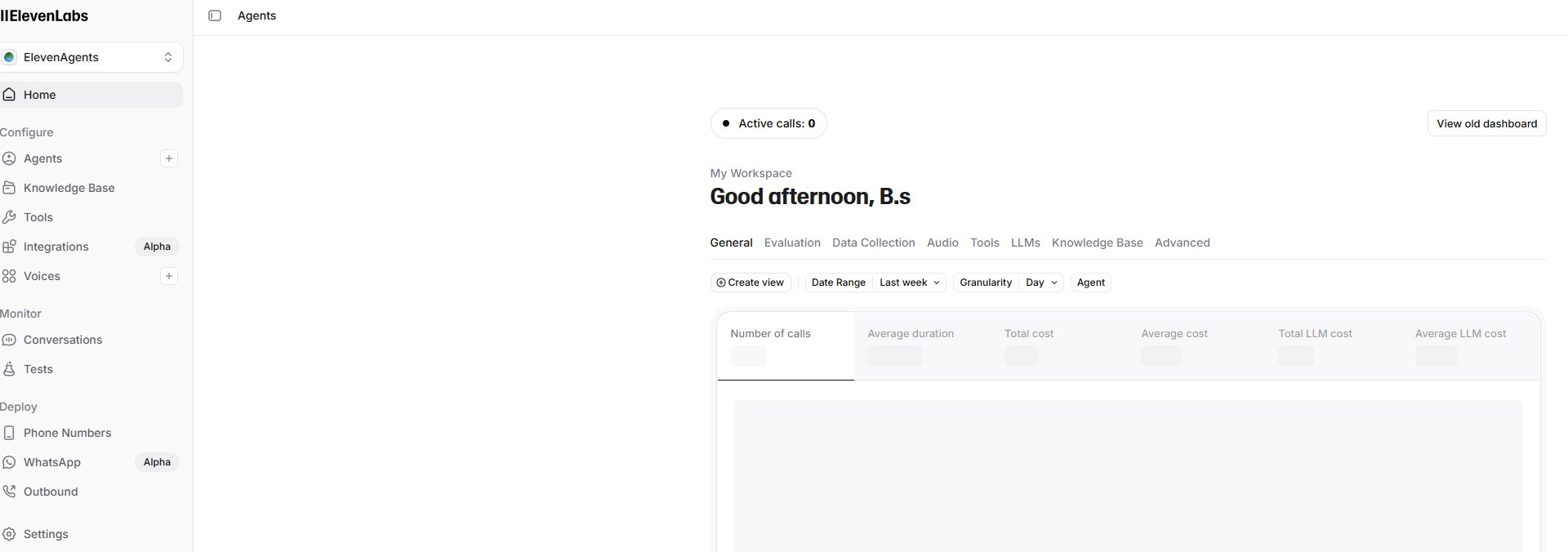

The combination of the ElevenAgents platform, aimed squarely at the conversational AI market, and the ultra-low-latency speech model 'Flash v2.5' is reshaping enterprise customer service. Flash v2.5 generates speech with a model latency of about 75 ms (milliseconds), short enough to remove much of the uncanny sense of talking to a machine. Notably, the platform includes a proprietary 'turn-taking' model: if a user suddenly cuts in while the AI is speaking, it recognizes the interruption immediately and pauses to switch to listening mode, much as a human would.
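ElevenLabs' turn-taking model is proprietary and not described in detail, so the sketch below is only a crude stand-in that shows the control flow of a barge-in: detect that the caller is speaking, stop talking, hand over the turn. It uses a simple energy threshold over audio frames instead of a learned model. The class name, the 0.02 RMS threshold, and the 3-frame (about 60 ms at an assumed 20 ms per frame) requirement are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    SPEAKING = "speaking"
    LISTENING = "listening"


@dataclass
class BargeInDetector:
    """Toy barge-in logic: stop talking once the caller has been audible
    for `min_frames` consecutive frames. All thresholds are assumptions."""
    energy_threshold: float = 0.02  # assumed RMS level that counts as speech
    min_frames: int = 3             # e.g. 3 x 20 ms frames = 60 ms of speech
    mode: Mode = Mode.SPEAKING
    _voiced_run: int = 0

    def on_frame(self, samples: list[float]) -> Mode:
        # Root-mean-square energy of this frame (0.0 for an empty frame).
        rms = (sum(s * s for s in samples) / len(samples)) ** 0.5 if samples else 0.0
        if self.mode is Mode.SPEAKING:
            # Count consecutive voiced frames; one quiet frame resets the run,
            # so a single click or cough does not interrupt the agent.
            self._voiced_run = self._voiced_run + 1 if rms >= self.energy_threshold else 0
            if self._voiced_run >= self.min_frames:
                self.mode = Mode.LISTENING  # pause playback, hand the turn to the user
                self._voiced_run = 0
        return self.mode


if __name__ == "__main__":
    det = BargeInDetector()
    silence, speech = [0.0] * 320, [0.1] * 320
    for frame in [silence, speech, speech, speech]:
        mode = det.on_frame(frame)
    print(mode)  # Mode.LISTENING
```

Requiring several consecutive voiced frames trades a few tens of milliseconds of reaction time for robustness against short noises; a production system would use a trained voice-activity or turn-detection model instead.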

These agents do more than answer questions. The latest systems have multimodal reasoning abilities: during a phone call, they can 'read' a photo of a bill the user sends from a smartphone, 'analyze' the nervous tone in the user's voice, and respond with empathy and reassurance. Their most powerful capability is 'Tool Calls' via API integration. Mid-conversation, the agent can proactively call the company's CRM API to look up a customer's order history, query a reservation system (for example, through a REST API) to confirm a calendar slot, or send a payment link by text message, autonomously carrying out real operational actions.
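The tool-call pattern described above can be sketched as a small dispatcher: the model emits a structured call, the application runs the matching function, and the JSON result is fed back into the conversation. This is a minimal sketch under stated assumptions: the `{"name": ..., "arguments": {...}}` message shape is a generic convention, not ElevenAgents' documented format, and `lookup_order_history` and `send_payment_link` are hypothetical stubs standing in for real CRM and SMS integrations.

```python
import json
from typing import Any, Callable


# Hypothetical tools; real versions would call the company's CRM or SMS APIs.
def lookup_order_history(customer_id: str) -> list[dict[str, Any]]:
    return [{"order_id": "A-1001", "customer_id": customer_id, "status": "shipped"}]


def send_payment_link(phone: str, amount_cents: int) -> dict[str, Any]:
    return {"sent_to": phone, "amount_cents": amount_cents, "status": "queued"}


# Registry mapping tool names (as the model would emit them) to functions.
TOOLS: dict[str, Callable[..., Any]] = {
    "lookup_order_history": lookup_order_history,
    "send_payment_link": send_payment_link,
}


def dispatch(tool_call_json: str) -> str:
    """Run one model-issued tool call and return a JSON result for the next turn."""
    call = json.loads(tool_call_json)
    name, args = call["name"], call.get("arguments", {})
    tool = TOOLS.get(name)
    if tool is None:
        # Report unknown tools back to the model instead of crashing the call.
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        return json.dumps({"result": tool(**args)})
    except TypeError as exc:  # e.g. wrong or missing arguments from the model
        return json.dumps({"error": str(exc)})


if __name__ == "__main__":
    print(dispatch('{"name": "send_payment_link", '
                   '"arguments": {"phone": "+15550100", "amount_cents": 4999}}'))
    # {"result": {"sent_to": "+15550100", "amount_cents": 4999, "status": "queued"}}
```

Returning errors as JSON rather than raising lets the agent explain the problem to the caller or retry with corrected arguments, which matters when the conversation is live.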

The biggest barrier to corporate adoption, reliability and security, has also been addressed. ElevenAgents is the world's first voice AI platform to earn AIUC-1 insurance certification from the Artificial Intelligence Underwriting Company (AIUC), an independent third party. The certification is granted only after passing over 5,835 adversarial tests across 14 risk categories, including hallucinations (the AI giving customers false information), leaks of private data, and system hacking. It gives insurers a legal and institutional basis to underwrite and cover the operational errors of AI agents much as they would errors by full-time human employees, which has driven adoption even in conservative industries such as banking and healthcare.

| ElevenLabs API and Infrastructure Specs | Detailed Support Info |
|---|---|
| Core API Product Suite | Text-to-Speech (TTS), Speech-to-Text (STT), Dubbing, Audio Native Player |
| Developer Tools and SDKs | Supports Python, JS/TS, Flutter, Swift; ultra-low-latency streaming via WebSocket |
| Enterprise Security & Compliance | SOC 2, HIPAA, and GDPR compliance; encryption in transit and a Zero Retention data mode |
| Billing Structure | TTS billed per character; STT and Dubbing billed per audio minute; custom enterprise SLAs available |
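Because TTS is metered by characters while STT and dubbing are metered by audio minutes, a mixed workload needs two different units to estimate cost. The sketch below combines them. All prices in `Rates` are hypothetical placeholders (actual prices depend on plan and contract), and prorating minutes to the second is an assumption; some plans may round differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Hypothetical unit prices in USD; real prices depend on plan and contract."""
    tts_per_1k_chars: float
    stt_per_minute: float
    dubbing_per_minute: float


def estimate_cost(rates: Rates, tts_chars: int = 0,
                  stt_seconds: float = 0.0, dubbing_seconds: float = 0.0) -> float:
    """TTS is metered by characters; STT and dubbing by minutes of audio.
    Minutes are prorated to the second here (an assumption)."""
    tts = tts_chars / 1000 * rates.tts_per_1k_chars
    stt = stt_seconds / 60 * rates.stt_per_minute
    dub = dubbing_seconds / 60 * rates.dubbing_per_minute
    return round(tts + stt + dub, 2)


if __name__ == "__main__":
    rates = Rates(tts_per_1k_chars=0.30, stt_per_minute=0.40, dubbing_per_minute=1.00)
    # 50,000 characters of narration plus 10 minutes of transcription.
    print(estimate_cost(rates, tts_chars=50_000, stt_seconds=600))  # 19.0
```

Keeping the unit conversions (per 1,000 characters, seconds to minutes) inside one function makes it easy to swap in real contract rates without touching the workload figures.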

Review and Outlook

The 'uncanny valley', the peculiar mechanical strangeness unique to voice-based AI, has been all but conquered by ElevenLabs. Many entrepreneurs and authors now run podcasts and publish lecture audiobooks through 'digital twins' that clone their voices from typed scripts, without ever setting foot in a recording studio.[14] On the business side, emotionally demanding work, from overnight emergency-hospital call centers to overdue-payment collection calls at banks, is now handled by tireless AI agents 365 days a year. Within the next few years, hardware interfaces of every kind, including smart home speakers, car infotainment systems, and unmanned kiosks, are expected to adopt this kind of powerful, expressive voice platform.